Last week Mickael and I met up with developers from Arius3D. They specialize in 3D imaging, which includes scanning 3D objects, displaying them on screen and printing them out in 3D. They have a free ActiveX plug-in for IE called 3DImageSuite, which allows users to view the 3D objects.

The problem is users are limited to using one browser to view this content. 3DImageSuite is also only available for Windows. So even though IE “dominates” the browser world, Arius3D are limiting their non-IE users.

Because of our experience developing JavaScript libraries which specialize in 3D rendering, we have partnered up with them to solve this problem. We’ll be using WebGL to do the rendering, not just because it’s what we specialize in, but also because it’s becoming a standard. Once WebGL is fully implemented in the other browsers and we complete this technology, their content will be rendered cleanly without any add-ons. It will then be IE users who are at a disadvantage when trying to view this content.

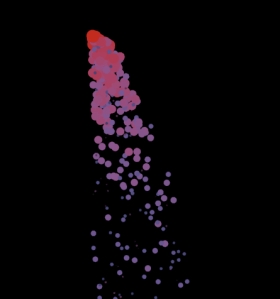

3DImageSuite displays point clouds, which consist of XYZ and RGB data. So the problem sounds trivial, especially since we already have the capacity to render 3D points in both C3DL and Processing.js. The real problem is that the 3D scans are very dense: they are composed of millions of points. Neither of our libraries can practically render this much data as they stand.
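
To get a feel for the scale, here is a minimal sketch of how a cloud of XYZ and RGB data might be packed into typed arrays in the browser. The layout (three 32-bit floats per position, three bytes per colour) and the names `createPointCloud`, `setPoint` and `byteSize` are my own assumptions for illustration. This is not the 3DImageSuite format.

```javascript
// Hypothetical layout: positions as 32-bit floats, colours as bytes.
// Illustration only, not the 3DImageSuite file format.
function createPointCloud(count) {
  return {
    count: count,
    positions: new Float32Array(count * 3), // x, y, z per point
    colors: new Uint8Array(count * 3)       // r, g, b per point
  };
}

// Write one point's position and colour at index i.
function setPoint(cloud, i, x, y, z, r, g, b) {
  cloud.positions.set([x, y, z], i * 3);
  cloud.colors.set([r, g, b], i * 3);
}

// Bytes needed just to hold the raw data on the client.
function byteSize(cloud) {
  return cloud.positions.byteLength + cloud.colors.byteLength;
}

const cloud = createPointCloud(1000000);
setPoint(cloud, 0, 0.5, 1.0, -2.0, 255, 128, 0);
console.log(byteSize(cloud)); // 15000000
```

In this layout every million points costs about 15 MB before any compression, so the download and the memory footprint both become a concern long before the rendering does.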

To get started on this project, I reviewed my notes from the meeting with the Arius3D developers and started brainstorming ideas for a solution.

• One of the first things I thought about was allowing the user to control the level of detail. This can be extremely valuable user input. If the user doesn’t require 100% detail, there is no point in wasting resources downloading and rendering it. This can be the case when they are on a limited mobile platform.

• We are in the process of trying to speed up Processing.js by getting the browser to JIT-compile the JavaScript. This should allow the JS to run at close to native C++ speed. We’ll be trying to place as much processing as we can on the GPU and CPU.

• Use compression on server side and decompress data on the client side to reduce download times.

• Implement some form of spatial partitioning, such as an octree, to cull splats.

• Stream the data so lower quality ‘versions’ of a model can be displayed quickly and progressively gain quality.

• Use static rendering. That is, render the model only once and not in ‘real-time’. If the user rotates the object, render a low-quality version while the user is rotating it. Once the user stops, re-render 100% of the object. I’ve seen this done before, but I forgot about it. Luckily someone reminded me at OCE. Thanks!

• Because self-shadowing is something Arius3D is considering, we may need to use raytracing instead of simple Blinn-Phong shaders.
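
The level of detail, streaming and static rendering ideas above can share one mechanism. This is only a rough sketch under my own assumptions: if the points are shuffled once so that any prefix of the data is a roughly uniform sample of the scan, then "lower quality" simply means "draw fewer points from the front". The function names and the 10% figure used while rotating are hypothetical.

```javascript
// Fisher-Yates shuffle of point indices. Done once (ideally on the server)
// so that any prefix of the order is a roughly uniform sample of the scan.
function shuffledOrder(count, random = Math.random) {
  const order = new Uint32Array(count);
  for (let i = 0; i < count; i++) order[i] = i;
  for (let i = count - 1; i > 0; i--) {
    const j = Math.floor(random() * (i + 1));
    const tmp = order[i];
    order[i] = order[j];
    order[j] = tmp;
  }
  return order;
}

// How many points to draw right now.
// total: points in the full scan; loaded: points downloaded so far;
// detail: user-chosen fraction in [0, 1];
// interacting: true while the user is rotating the model.
function pointsToDraw(total, loaded, detail, interacting, interactiveFraction = 0.1) {
  const fraction = interacting ? Math.min(detail, interactiveFraction) : detail;
  const clamped = Math.max(0, Math.min(1, fraction));
  const wanted = Math.round(total * clamped);
  // Never ask for more points than have been streamed in.
  return Math.min(wanted, loaded);
}

const total = 1000000;
console.log(pointsToDraw(total, total, 1.0, false));  // 1000000
console.log(pointsToDraw(total, total, 1.0, true));   // 100000
console.log(pointsToDraw(total, 250000, 0.5, false)); // 250000
```

With the vertex buffer stored in the shuffled order, the returned count could be passed as the last argument of a WebGL call such as `gl.drawArrays(gl.POINTS, 0, n)`, so switching quality would not require re-uploading any data.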
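
For the spatial partitioning idea, here is a minimal octree sketch. It is not a design we have settled on. The `Octree` class is hypothetical, and to keep it short the query tests against an axis-aligned box where a real renderer would test nodes against the view frustum. The point is only that whole cubes of points get skipped with a single comparison.

```javascript
// Minimal octree over points stored as [x, y, z]. Hypothetical sketch:
// a real renderer would cull nodes against the view frustum, not a box.
class Octree {
  constructor(center, halfSize, capacity = 8) {
    this.center = center;     // [x, y, z] of the cube centre
    this.halfSize = halfSize; // half the cube's edge length
    this.capacity = capacity; // max points before a leaf splits
    this.points = [];
    this.children = null;     // null while this node is a leaf
  }

  insert(p) {
    if (this.children === null) {
      this.points.push(p);
      // Split when full; the size floor stops endless splitting on duplicates.
      if (this.points.length > this.capacity && this.halfSize > 1e-6) this.split();
      return;
    }
    this.children[this.childIndex(p)].insert(p);
  }

  // One bit per axis selects the octant.
  childIndex(p) {
    return (p[0] >= this.center[0] ? 1 : 0) |
           (p[1] >= this.center[1] ? 2 : 0) |
           (p[2] >= this.center[2] ? 4 : 0);
  }

  // Turn this leaf into an inner node and push its points down.
  split() {
    const h = this.halfSize / 2;
    this.children = [];
    for (let i = 0; i < 8; i++) {
      const c = [
        this.center[0] + (i & 1 ? h : -h),
        this.center[1] + (i & 2 ? h : -h),
        this.center[2] + (i & 4 ? h : -h)
      ];
      this.children.push(new Octree(c, h, this.capacity));
    }
    const old = this.points;
    this.points = [];
    for (const p of old) this.children[this.childIndex(p)].insert(p);
  }

  // Collect points inside the box [min, max], skipping whole nodes
  // whose cube does not overlap the box.
  query(min, max, out = []) {
    for (let a = 0; a < 3; a++) {
      if (this.center[a] + this.halfSize < min[a] ||
          this.center[a] - this.halfSize > max[a]) return out;
    }
    if (this.children === null) {
      for (const p of this.points) {
        if (p[0] >= min[0] && p[0] <= max[0] &&
            p[1] >= min[1] && p[1] <= max[1] &&
            p[2] >= min[2] && p[2] <= max[2]) out.push(p);
      }
    } else {
      for (const child of this.children) child.query(min, max, out);
    }
    return out;
  }
}

const tree = new Octree([0, 0, 0], 1);
for (let i = 0; i < 1000; i++) {
  tree.insert([Math.random() * 2 - 1, Math.random() * 2 - 1, Math.random() * 2 - 1]);
}
const visible = tree.query([0, 0, 0], [1, 1, 1]);
console.log(visible.length); // roughly an eighth of the points
```

The same tree could also carry a coarse representative per node, which would tie in with the level of detail idea, but that is beyond this sketch.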

These are just some of the initial thoughts I’ve had along with ideas from the developers. I’ve begun some research on surface splatting and spatial partitioning and the problem does seem very interesting and exciting. I think more research, development and talks with the developers will lead us in the right direction.